BGP EVPN-based MCT cluster formation

Multi-Chassis Trunking (MCT) cluster formation uses BGP EVPN Ethernet Segment (ES) and Auto-Discovery (AD) routes, and employs proprietary mechanisms to prevent loops within the cluster during transient configuration states.

MCT and non-MCT services share the same EVPN instance; there is no separate EVPN instance for MCT. Therefore, EVPN must be configured even on a standalone MCT cluster.

Dependency on the EVPN instance

Forming an MCT cluster requires an EVPN configuration, along with a BGP neighbor and an MCT cluster and cluster client configuration. The MCT cluster automatically becomes a member of the VLANs and bridge domains configured under EVPN. The following is an example configuration.

    device(config)# cluster MCT1
    device(config-cluster-MCT1)# evpn
    rd auto
    route-target both auto ignore-as
    vlan add 100,200,1000-4000
    bridge-domain add 100,200,1000-4000

This configuration creates an EVPN instance with an automatically derived route distinguisher (RD) and route targets (RTs), and adds the specified VLANs and bridge domains to it.
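The `vlan add` and `bridge-domain add` arguments are lists of single IDs and ranges. The following minimal sketch, using a hypothetical `expand_id_list` helper (not an SLX API), shows how such a list expands into the set of IDs that become EVPN members, and therefore MCT cluster members. It assumes that ranges include both endpoints.

```python
def expand_id_list(spec: str) -> set[int]:
    """Hypothetical helper: expand an ID list such as "100,200,1000-4000".

    Assumption: "a-b" denotes the inclusive range a..b.
    """
    ids: set[int] = set()
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue  # tolerate stray commas
        if "-" in part:
            lo_s, hi_s = part.split("-", 1)
            lo, hi = int(lo_s), int(hi_s)
            if lo > hi:
                raise ValueError(f"empty range: {part}")
            ids.update(range(lo, hi + 1))  # +1 because the range is inclusive
        else:
            ids.add(int(part))
    return ids


vlans = expand_id_list("100,200,1000-4000")
print(len(vlans))  # 3003: VLANs 100 and 200 plus 3001 VLANs in 1000-4000
```

The same list is applied to bridge domains in the example, so both sets have the same membership.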

BGP EVPN peering for the MCT cluster

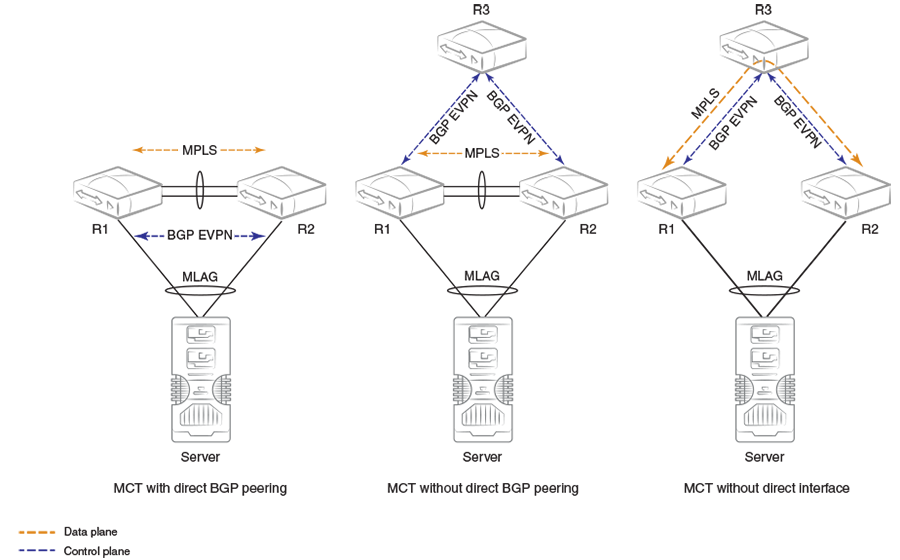

MCT cluster formation is controlled by BGP EVPN peering between the cluster members. The following figure illustrates typical MCT use cases.

BGP EVPN peering (not necessarily direct) between the MCT cluster members is required.

The first diagram shows a standalone MCT cluster, the simplest MCT use case, in which BGP EVPN peering exists only between the cluster members. An MPLS tunnel is established over the direct link (peer interface), so the control plane and the data plane use the same path.

The second diagram shows separate control-plane and data-plane paths: the cluster members do not peer directly over BGP EVPN. On SLX devices, the data plane in this case is a VXLAN tunnel between the MCT peers.

The third diagram shows MCT cluster formation without a direct link (peer interface). In this case, a multihop MPLS tunnel is established through intermediate routers.

The MCT cluster state no longer depends on a BGP session with the peer IP address specified in the cluster configuration. Instead, the cluster state is determined by the reachability of the BGP EVPN next hop for the corresponding encapsulation (MPLS or VXLAN).
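The rule above can be modeled as a small decision function. This is a hypothetical sketch, not SLX code: `PeerView` and `cluster_state` are illustrative names, and the model assumes that a node tracks next-hop reachability per encapsulation. The point it shows is that the direct BGP session with the configured peer IP is not consulted at all.

```python
from dataclasses import dataclass, field


@dataclass
class PeerView:
    """Hypothetical snapshot of what one MCT node knows about its peer."""
    # Whether a direct BGP session with the configured peer IP exists.
    bgp_session_to_peer_ip: bool
    # Encapsulation ("mpls" or "vxlan") -> is the peer's EVPN next hop reachable?
    nexthop_reachable: dict[str, bool] = field(default_factory=dict)


def cluster_state(view: PeerView, encap: str) -> str:
    # Only next-hop reachability for the cluster's encapsulation matters;
    # bgp_session_to_peer_ip is intentionally ignored.
    return "up" if view.nexthop_reachable.get(encap, False) else "down"


# Second use case: no direct EVPN peering, but the VXLAN next hop is reachable.
view = PeerView(bgp_session_to_peer_ip=False, nexthop_reachable={"vxlan": True})
print(cluster_state(view, "vxlan"))  # up
```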

Handling of ES/AD routes in BGP

ES and AD-per-ES routes are exchanged between MCT peers for Designated Forwarder (DF) election. The ES route is originated when the MCT cluster client is deployed. A set of AD-per-ES routes is originated when the cluster client interface is operationally up.
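This section does not state which DF election algorithm SLX uses. For illustration only, the following sketch shows the default service-carving procedure from RFC 7432: the PEs that advertised an ES route for the same ES are ordered by IP address, numbered from 0, and the PE whose ordinal equals V mod N becomes the DF for VLAN V. The function name and addresses are hypothetical.

```python
import ipaddress


def elect_df(es_peers: list[str], vlan: int) -> str:
    """Return the DF for `vlan` among the PEs attached to one ES.

    Sketch of RFC 7432 default service carving, not necessarily the
    SLX implementation.
    """
    if not es_peers:
        raise ValueError("no PE advertised an ES route for this ES")
    # Sort numerically by address, not as strings ("10.0.0.9" < "10.0.0.10").
    ordered = sorted(set(es_peers), key=ipaddress.ip_address)
    return ordered[vlan % len(ordered)]


peers = ["10.0.0.10", "10.0.0.9"]  # hypothetical MCT node addresses
print(elect_df(peers, 100))  # 10.0.0.9
print(elect_df(peers, 101))  # 10.0.0.10
```

With two MCT nodes, this procedure splits DF duties for alternating VLAN IDs between the nodes.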

ES routes carry the BGP ES-Import Route Target extended community, which is constructed automatically from the ESI value. ES-Import RTs are added to EVPN automatically whenever a cluster client interface with an ES is configured. An ES route is imported only by routers that are attached to the ES identified by its ESI.
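The following sketch assumes the auto-derivation defined in RFC 7432 (the section does not spell out the exact SLX encoding). An ESI is 10 octets (a 1-octet type and a 9-octet value), and the ES-Import RT carries the high-order 6 octets of that 9-octet value. The helper names and the sample ESI are hypothetical.

```python
def es_import_rt(esi: bytes) -> bytes:
    """Derive the 8-octet ES-Import RT extended community from a 10-octet ESI."""
    if len(esi) != 10:
        raise ValueError("an ESI is 10 octets: 1-octet type + 9-octet value")
    # Type 0x06 (EVPN) and Sub-Type 0x02 (ES-Import RT), followed by the
    # high-order 6 octets of the 9-octet ESI value (ESI octets 1..6).
    return bytes([0x06, 0x02]) + esi[1:7]


def imports_es_route(route_rt: bytes, local_esis: list[bytes]) -> bool:
    """Import an ES route only if this router is attached to a matching ES."""
    return any(es_import_rt(esi) == route_rt for esi in local_esis)


# Hypothetical type-1 ESI: type 0x01, LACP system MAC aa:bb:cc:dd:ee:ff,
# port key 0x0001, and a final 0x00 octet.
esi = bytes.fromhex("01aabbccddeeff000100")
rt = es_import_rt(esi)
print(rt.hex())                     # 0602aabbccddeeff
print(imports_es_route(rt, [esi]))  # True: attached to this ES
print(imports_es_route(rt, []))     # False: not a member of this ES
```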

Like other EVPN routes, ES and AD routes are added to the BGP RIB-In and advertised to EVPN neighbors.

AD-per-ES routes must carry the route targets of all EVIs that are members of the ES (the cluster client interface). Because an ES can have a large number of member EVIs (up to 4K VLANs plus bridge domains), a single AD-per-ES route might not fit in one BGP UPDATE message, which is limited to 4,096 bytes. Therefore, multiple AD-per-ES routes, each with a unique RD, are generated for each ES, as shown in the following table.

| RD index (RD = Router-ID:RD-index) | RTs of the EVIs attached to the AD-per-ES route |
|---|---|
| 1 | VLAN 1–127 |
| 2 | VLAN 128–255 |
| 3 | VLAN 256–383 |
| … | … |
| 32 | VLAN 3968–4095 |
| 33 | BD 1–127 |
| 34 | BD 128–255 |
| 35 | BD 256–383 |
| … | … |
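The table follows a simple pattern: EVI IDs are split into blocks of 128, RD indexes 1 to 32 cover VLANs, and indexes from 33 onward cover bridge domains. The following sketch reproduces that mapping and the size arithmetic behind it. The function names, router ID, and EVI lists are hypothetical. It assumes 8 octets per RT extended community and the 4,096-octet BGP message limit, and does not set an upper bound for bridge-domain IDs because the table elides it.

```python
from collections import defaultdict

VLAN_MAX = 4095
BLOCK = 128             # EVI IDs per AD-per-ES route, per the table
VLAN_INDEX_COUNT = 32   # RD indexes 1-32 are used for VLANs
RT_OCTETS = 8           # one RT extended community is 8 octets
BGP_MAX_MESSAGE = 4096  # maximum BGP message size in octets


def rd_index(kind: str, evi_id: int) -> int:
    """Map a VLAN or bridge-domain ID to the RD index of its AD-per-ES route."""
    if evi_id < 1:
        raise ValueError("EVI IDs start at 1")
    if kind == "vlan":
        if evi_id > VLAN_MAX:
            raise ValueError("VLAN ID out of range")
        return 1 + evi_id // BLOCK
    if kind == "bd":
        return VLAN_INDEX_COUNT + 1 + evi_id // BLOCK
    raise ValueError(f"unknown EVI kind: {kind}")


def ad_per_es_routes(router_id: str, vlans, bds) -> dict[str, list[str]]:
    """Group EVIs into AD-per-ES routes keyed by RD (Router-ID:RD-index)."""
    routes: dict[str, list[str]] = defaultdict(list)
    for kind, ids in (("vlan", vlans), ("bd", bds)):
        for evi_id in sorted(ids):
            routes[f"{router_id}:{rd_index(kind, evi_id)}"].append(f"{kind} {evi_id}")
    return dict(routes)


routes = ad_per_es_routes("10.1.1.1", vlans=[100, 200, 1000], bds=[100, 200])
for rd, evis in routes.items():
    print(rd, evis)

# Why one route cannot carry every VLAN RT, but one 128-EVI block can:
print(VLAN_MAX * RT_OCTETS)  # 32760 octets, far above BGP_MAX_MESSAGE
print(BLOCK * RT_OCTETS)     # 1024 octets at most per route
```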

AD-per-ES routes carry the ESI label in the ESI Label extended community, which is used for split-horizon filtering.
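The following is a minimal model of MPLS-style split-horizon filtering as described in RFC 7432, not the SLX forwarding implementation. Each node advertises an ESI label for each local ES. When the peer floods a BUM frame that arrived on that ES, it includes the label, and the receiving node uses the label to avoid sending the frame back onto the same ES. The class name, label value, and interface names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SplitHorizonFilter:
    # ESI label this node advertised (in the ESI Label extended community of
    # its AD-per-ES routes) -> the local cluster client interface on that ES.
    label_to_client_if: dict[int, str] = field(default_factory=dict)

    def advertise(self, esi_label: int, client_if: str) -> None:
        self.label_to_client_if[esi_label] = client_if

    def flood_targets(self, candidate_ifs: list[str],
                      esi_label: Optional[int] = None) -> list[str]:
        """Interfaces that may receive a BUM frame arriving from the MCT peer.

        A frame carrying one of our ESI labels originated on that ES, so it
        must not be sent back to the client interface attached to the same ES.
        """
        blocked = self.label_to_client_if.get(esi_label)
        return [i for i in candidate_ifs if i != blocked]


f = SplitHorizonFilter()
f.advertise(3001, "client-1")
ports = ["client-1", "client-2", "eth-0/5"]
print(f.flood_targets(ports, esi_label=3001))  # ['client-2', 'eth-0/5']
print(f.flood_targets(ports))                  # all three ports
```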