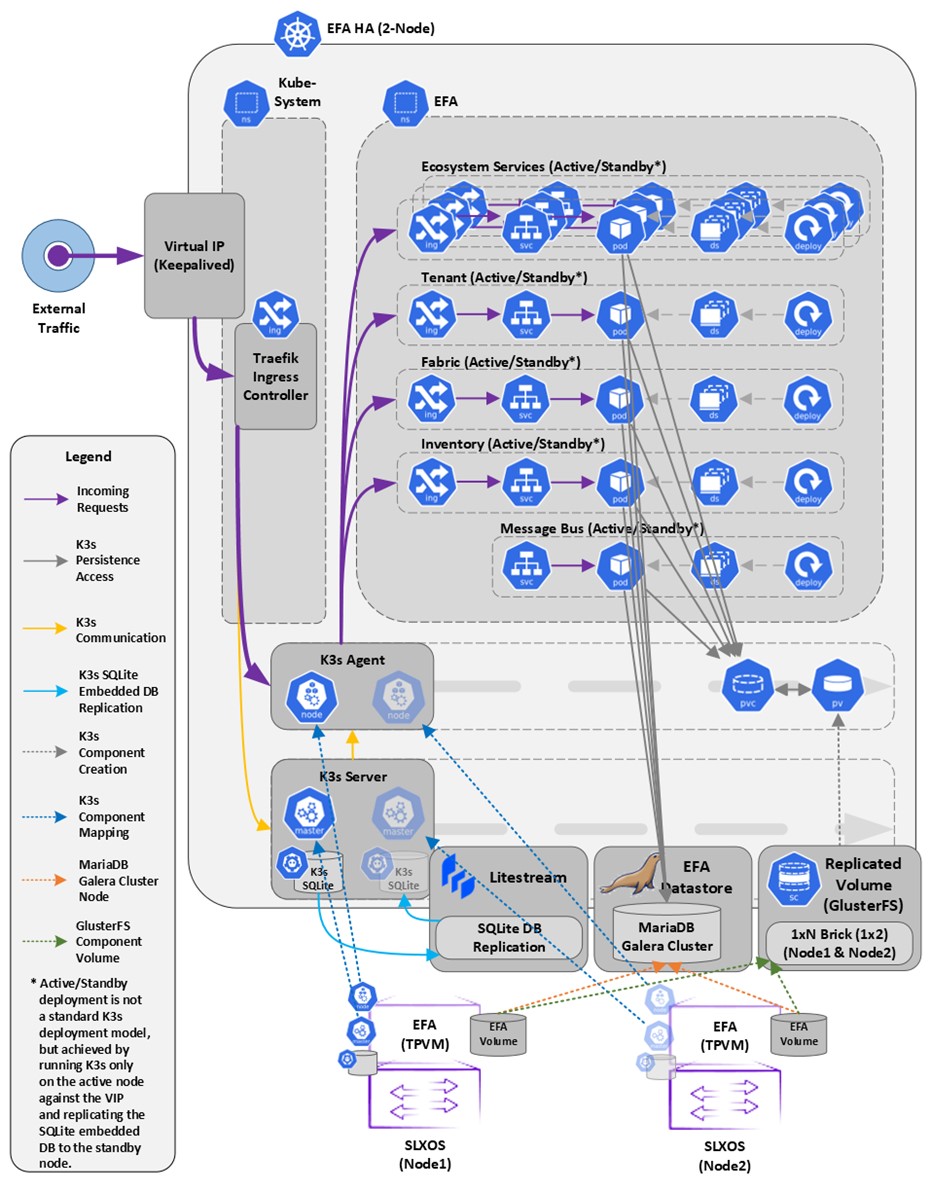

EFA Deployment for High Availability

You can deploy EFA in a two-node cluster for high availability.

Overview

A high-availability cluster is a group of servers that provides continuous uptime, or at least minimal downtime, for the applications on the servers in the group. If an application on one server fails, another server in the cluster maintains the availability of the application.

In the following diagram, EFA is deployed in the TPVM running on SLX-OS. The two EFA instances are clustered and configured with one IP address, so that clients need to reach only one endpoint. All EFA services are installed on each node. The node on which EFA is installed is the active node and processes all requests. The other node is the standby node, which processes all requests when the active node fails.

All operations provided by EFA services must be idempotent, meaning that multiple identical requests or operations produce the same result. For more information, see the "Idempotency" section of the Extreme Fabric Automation Administration Guide, 3.1.0.
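The idempotency requirement can be illustrated with a minimal Python sketch. The `FabricStore` class and its `register_device` method are hypothetical stand-ins invented for this example and are not part of any EFA API. The sketch only shows the property itself: repeating an identical request (for example, a client retry after a failover) leaves the state unchanged.

```python
class FabricStore:
    """Hypothetical in-memory stand-in for EFA state; not an EFA API."""

    def __init__(self):
        self.devices = {}

    def register_device(self, ip, role):
        """Idempotent: keyed by IP, so a repeated request overwrites
        the entry with identical data instead of adding a duplicate."""
        self.devices[ip] = {"role": role}
        return dict(self.devices[ip])


store = FabricStore()
first = store.register_device("10.20.246.5", "leaf")
second = store.register_device("10.20.246.5", "leaf")  # identical retry

# Both calls return the same result, and only one device is stored.
print(first == second, len(store.devices))
```

An operation that appended a new record on every call would not satisfy this property, because a retried request would leave a duplicate behind.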

EFA uses the following services to implement an HA deployment:

- Keepalived (VRRP) – A program that runs on both nodes. The active node frequently sends VRRP packets to the standby node. If the active node stops sending the packets, keepalived on the standby node assumes the active role, and the standby node becomes the active node. Each state change runs a keepalived notify script containing logic to ensure EFA's continued operation after a failure. In a two-node cluster, a network partition can cause a "split-brain" condition in which both nodes are active. When the network recovers, VRRP establishes a single active node that determines the state of EFA.

- K3s – The K3s server runs on the active node. Kubernetes state is stored in SQLite and is synced in real time to the standby node by a dedicated daemon, litestream. On a failover, the keepalived notify script on the new active node reconstructs the Kubernetes SQLite database from the synced state and starts K3s. K3s runs on only one node at a time, so the HA cluster looks like a single-node cluster that is tied to the keepalived-managed virtual IP.

- MariaDB and Galera – EFA business states (device, fabric, and tenant registrations and configuration) are stored in a set of databases managed by MariaDB. Both nodes run a MariaDB server, and Galera clustering keeps the business state in sync on both nodes during normal operation.

- GlusterFS – A clustered file system used to store EFA's log files, various certificates, and subinterface definitions. A daemon runs on both nodes and seamlessly syncs several directories.
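The keepalived behavior described in the list above (promotion of the standby node when VRRP packets stop, and recovery from a split-brain) can be sketched as a small Python simulation. This is a simplified model, not keepalived's implementation: the `Node` class, the priorities, and the timeout value are assumptions chosen for illustration, and the rule that the higher-priority node wins follows general VRRP behavior rather than anything specific to EFA.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Simplified VRRP participant (hypothetical model)."""
    name: str
    priority: int
    state: str = "BACKUP"
    last_advert: float = 0.0

    def on_advert(self, now, sender_priority):
        """Handle a VRRP advertisement received from the peer."""
        if self.state == "MASTER" and sender_priority > self.priority:
            # Two active nodes met after a partition: lower priority yields.
            self.state = "BACKUP"
        if self.state == "BACKUP":
            self.last_advert = now

    def tick(self, now, master_down_interval):
        """Promote to MASTER if the peer has been silent for too long."""
        if self.state == "BACKUP" and now - self.last_advert > master_down_interval:
            self.state = "MASTER"


a = Node("tpvm", priority=100, state="MASTER")
b = Node("tpvm2", priority=90)

# Normal operation: the standby hears the active node and stays BACKUP.
b.on_advert(1.0, a.priority)
b.tick(2.0, master_down_interval=3.0)
normal = (a.state, b.state)

# Network partition: advertisements stop arriving, so the standby promotes
# itself while the original active node keeps running (split-brain).
b.tick(5.0, master_down_interval=3.0)
split_brain = (a.state, b.state)

# Network recovers: each node hears the other; one active node remains.
a.on_advert(6.0, b.priority)
b.on_advert(6.0, a.priority)
recovered = (a.state, b.state)

print(normal, split_brain, recovered)
```

In a real deployment, each of these state changes would also trigger the keepalived notify script, which is where the EFA-specific recovery logic runs.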

Note

Although Kubernetes runs as a single-node cluster tied to the virtual IP, EFA CLIs still operate correctly when they are run from either the active or the standby node. Commands are converted to REST and issued over HTTPS to the ingress controller via the virtual IP tied to the active node.

The efa status command confirms the following:

- For the active node:
  - All enabled EFA services are reporting Ready
  - Kubernetes state is consistent with all the enabled EFA services (for example, service endpoints exist)
  - The host is a member of the Galera or MariaDB cluster
- For the standby node:
  - It is reachable via SSH from the active node
  - It is a member of the Galera or MariaDB cluster
- For both the nodes:
  - The Galera cluster size is 2 if both the nodes are up, and the cluster size is >= 1 if the standby node is down.
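The checks listed above can be expressed as a single function, sketched here in Python. This is not how efa status is implemented; the function name, the dictionary keys, and the `standby_up` flag are all hypothetical and exist only to make the rules concrete.

```python
def failed_checks(active, standby, galera_size, standby_up=True):
    """Return descriptions of failed checks; an empty list means healthy.

    All field names are hypothetical, chosen to mirror the listed checks.
    """
    problems = []

    # Active node checks.
    not_ready = [name for name, ready in active["services_ready"].items() if not ready]
    if not_ready:
        problems.append("active: services not Ready: " + ", ".join(sorted(not_ready)))
    if not active["k8s_consistent"]:
        problems.append("active: Kubernetes state inconsistent with services")
    if not active["galera_member"]:
        problems.append("active: not a member of the Galera cluster")

    if standby_up:
        # Standby node checks.
        if not standby["ssh_reachable"]:
            problems.append("standby: not reachable via SSH from active")
        if not standby["galera_member"]:
            problems.append("standby: not a member of the Galera cluster")
        # Both nodes up: the cluster must contain exactly two members.
        if galera_size != 2:
            problems.append(f"cluster: expected Galera size 2, got {galera_size}")
    elif galera_size < 1:
        # Standby down: at least the active node must remain in the cluster.
        problems.append(f"cluster: expected Galera size >= 1, got {galera_size}")

    return problems


active = {
    "services_ready": {"fabric": True, "tenant": True},
    "k8s_consistent": True,
    "galera_member": True,
}
standby = {"ssh_reachable": True, "galera_member": True}

healthy = failed_checks(active, standby, galera_size=2)
degraded = failed_checks(active, standby, galera_size=1)
standby_down = failed_checks(active, None, galera_size=1, standby_up=False)
print(healthy, degraded, standby_down)
```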

Example

    NH-1# show efa status
    ===================================================
    EFA version details
    ===================================================
    Version : 3.1.0
    Build: 109
    Time Stamp: 22-10-25:12:45:44
    Mode: Secure
    Deployment Type: multi-node
    Deployment Platform: TPVM
    Deployment Suite: Fabric Automation
    Virtual IP: 10.20.246.103
    Node IPs: 10.20.246.101,10.20.246.102
    --- Time Elapsed: 8.512402ms ---
    ===================================================
    EFA Status
    ===================================================
    +-----------+---------+--------+---------------+
    | Node Name | Role    | Status | IP            |
    +-----------+---------+--------+---------------+
    | tpvm2     | active  | up     | 10.20.246.102 |
    +-----------+---------+--------+---------------+
    | tpvm      | standby | up     | 10.20.246.101 |
    +-----------+---------+--------+---------------+
    --- Time Elapsed: 19.168973841s ---
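If the status output needs to be consumed by a script, the node table in the example above can be parsed with a few lines of Python. This is a minimal sketch that assumes the table layout shown in the example; the function name `parse_status_table` is hypothetical, and the output format may differ in other EFA versions.

```python
def parse_status_table(text):
    """Parse the '| ... |' rows of the EFA Status table into dictionaries."""
    header = None
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip borders, banners, and timing lines
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if header is None:
            header = cells  # the first table row holds the column names
        else:
            rows.append(dict(zip(header, cells)))
    return rows


sample = """
+-----------+---------+--------+---------------+
| Node Name | Role    | Status | IP            |
+-----------+---------+--------+---------------+
| tpvm2     | active  | up     | 10.20.246.102 |
+-----------+---------+--------+---------------+
| tpvm      | standby | up     | 10.20.246.101 |
+-----------+---------+--------+---------------+
"""

rows = parse_status_table(sample)
active_nodes = [row for row in rows if row["Role"] == "active"]
print(active_nodes[0]["Node Name"], active_nodes[0]["IP"])
```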